The Purest Live-Streaming Practice 03: Rotating with a Filter and Fixing Stutter

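This series explores the most bare-bones way to implement live streaming on Android. Instead of relying on an off-the-shelf integrated SDK, it builds the pipeline from a fairly low level, which also makes it easier to understand how live streaming works and what the existing streaming frameworks do under the hood.

The previous article implemented the Camera handling and the stream pushing, but left a few bugs behind. This article deals with two of them: the wrongly oriented picture and the stuttering stream.

If you have not read the earlier articles, it is worth reading them first.

Let's fix the picture orientation first. There are several ways to do it; for example, the NV21 data coming from the Camera could be rotated directly. Here, though, we use an FFmpeg filter, which is also a good opportunity to learn how filters work.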
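For comparison, here is roughly what the "rotate the data directly" alternative looks like for a single 8-bit plane. This is only a sketch: `rotate_plane_cw90` is a hypothetical helper, it assumes a tightly packed plane with no row padding, and it handles just the Y plane. The interleaved chroma of NV21 would need the same treatment at half resolution, moving each V/U pair together.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/*
 * Rotate one 8-bit plane 90 degrees clockwise.
 * src is width x height bytes; dst must hold height x width bytes.
 */
void rotate_plane_cw90(const uint8_t *src, uint8_t *dst, int width, int height)
{
    assert(src != NULL && dst != NULL && width > 0 && height > 0);
    for (int y = 0; y < height; y++) {
        for (int x = 0; x < width; x++) {
            /* source pixel (x, y) lands in row x, column (height - 1 - y) */
            dst[x * height + (height - 1 - y)] = src[y * width + x];
        }
    }
}
```

For a 270-degree clockwise rotation the destination index would instead be `(width - 1 - x) * height + y`.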

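FFmpeg filters are quite involved to initialise, but once that is done they are very easy to use. To get a sense of how powerful filters are, have a look at the official documentation.

To use a filter, then, we first need an initialisation function: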

    /**
     * Initialise the filter graph
     */
    int init_filters(const char *filters_descr)
    {
        /* Register all AVFilters */
        avfilter_register_all();

        char args[512];
        int ret = 0;
        AVFilter *buffersrc = avfilter_get_by_name("buffer");
        AVFilter *buffersink = avfilter_get_by_name("buffersink");
        AVFilterInOut *outputs = avfilter_inout_alloc();
        AVFilterInOut *inputs = avfilter_inout_alloc();
        enum AVPixelFormat pix_fmts[] = { AV_PIX_FMT_YUV420P, AV_PIX_FMT_NONE };

        // Allocate the FilterGraph
        filter_graph = avfilter_graph_alloc();
        if (!outputs || !inputs || !filter_graph) {
            ret = AVERROR(ENOMEM);
            goto end;
        }

        /* The parameters filled in here must describe the input frames correctly */
        snprintf(args, sizeof(args),
                 "video_size=%dx%d:pix_fmt=%d:time_base=%d/%d:pixel_aspect=%d/%d",
                 src_width, src_height, pCodecCtx->pix_fmt,
                 pCodecCtx->time_base.num, pCodecCtx->time_base.den,
                 pCodecCtx->sample_aspect_ratio.num, pCodecCtx->sample_aspect_ratio.den);

        // Create the buffer source filter and add it to the FilterGraph
        ret = avfilter_graph_create_filter(&buffersrc_ctx, buffersrc, "in", args, NULL, filter_graph);
        if (ret < 0) {
            LOGE("Cannot create buffer source\n");
            goto end;
        }

        // Create the buffer sink filter and add it to the FilterGraph
        ret = avfilter_graph_create_filter(&buffersink_ctx, buffersink, "out", NULL, NULL, filter_graph);
        if (ret < 0) {
            LOGE("Cannot create buffer sink\n");
            goto end;
        }

        ret = av_opt_set_int_list(buffersink_ctx, "pix_fmts", pix_fmts, AV_PIX_FMT_NONE, AV_OPT_SEARCH_CHILDREN);
        if (ret < 0) {
            LOGE("Cannot set output pixel format\n");
            goto end;
        }

        outputs->name = av_strdup("in");
        outputs->filter_ctx = buffersrc_ctx;
        outputs->pad_idx = 0;
        outputs->next = NULL;

        inputs->name = av_strdup("out");
        inputs->filter_ctx = buffersink_ctx;
        inputs->pad_idx = 0;
        inputs->next = NULL;

        // Add the graph described by the string to the FilterGraph
        if ((ret = avfilter_graph_parse_ptr(filter_graph, filters_descr, &inputs, &outputs, NULL)) < 0) {
            LOGE("parse ptr error\n");
            goto end;
        }

        // Check the FilterGraph configuration
        if ((ret = avfilter_graph_config(filter_graph, NULL)) < 0) {
            LOGE("parse config error\n");
            goto end;
        }

        // Frame used to hold the filtered output
        new_frame = av_frame_alloc();
        //uint8_t *out_buffer = (uint8_t *) av_malloc(av_image_get_buffer_size(pCodecCtx->pix_fmt, pCodecCtx->width, pCodecCtx->height, 1));
        //av_image_fill_arrays(new_frame->data, new_frame->linesize, out_buffer, pCodecCtx->pix_fmt, pCodecCtx->width, pCodecCtx->height, 1);

    end:
        avfilter_inout_free(&inputs);
        avfilter_inout_free(&outputs);
        return ret;
    }

As you can see, initialising a filter is rather tedious, but once it is done, using it takes just two function calls:

    // Push an AVFrame into the FilterGraph
    ret = av_buffersrc_add_frame(buffersrc_ctx, yuv_frame);
    if (ret >= 0) {
        // Pull the filtered AVFrame out of the FilterGraph
        ret = av_buffersink_get_frame(buffersink_ctx, new_frame);
        if (ret >= 0) {
            ret = encode(pCodecCtx, &pkt, new_frame, &got_packet);
            // Release the filtered frame's buffers before new_frame is reused
            av_frame_unref(new_frame);
        } else {
            LOGE("Error while getting the filtergraph\n");
        }
    } else {
        LOGE("Error while feeding the filtergraph\n");
    }

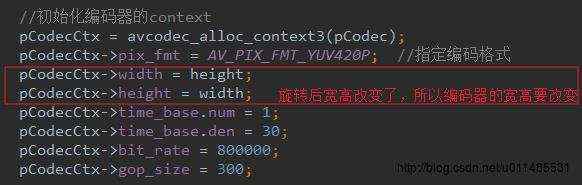

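So the initialisation is tedious, but the usage is very convenient. Because the frame is rotated, however, its width and height are swapped, so the encoder's width and height must be changed to match; otherwise encoding fails.

At this point the filter has essentially fixed the picture orientation.

Now for the second problem, the stuttering stream, which is mainly caused by how pts/dts are set.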

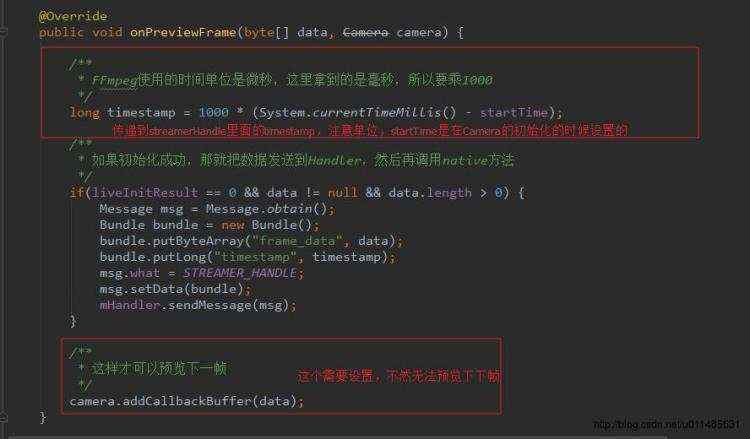

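First, modify the native method streamerHandle by adding one more parameter, which is used to set the pts:

    /**
     * Encode and push the data of each preview frame.
     *
     * @param data      frame data in NV21 format
     * @param timestamp used to set the pts
     * @return 0 on success, a negative value on failure
     */
    private native int streamerHandle(byte[] data, long timestamp);

After changing it in LiveActivity, remember to update the corresponding function on the C side as well, otherwise the call will fail.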

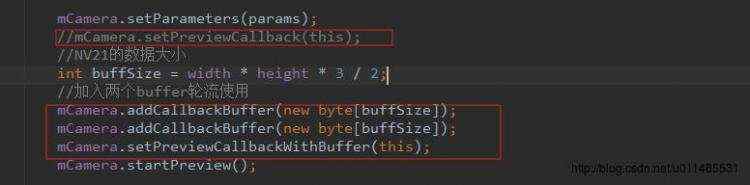

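To improve performance here, Camera's setPreviewCallback can be replaced with setPreviewCallbackWithBuffer, which avoids allocating a new byte[] for every preview frame and the frequent GC that comes with it.

With LiveActivity done, the next step is to set the pts in the native layer.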

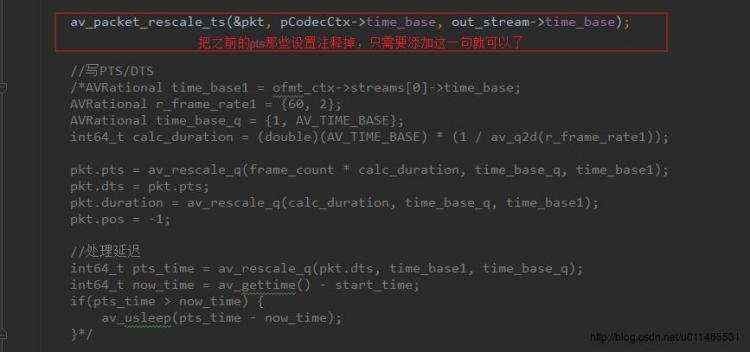

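The main job of av_packet_rescale_ts is to convert the valid timing fields (timestamps/duration) of a packet from one time_base to another.

In FFmpeg, a time_base is simply the unit in which time is measured. For example, with a time_base of {1, 30}, a pts of 20 means 20/30 of a second. To express the same instant with a time_base of {1, 1000000}, the pts has to be converted: (20 * 1 / 30) / (1 / 1000000) ≈ 666667.

Moreover, the decoder has one time_base and the encoder has its own, so after processing, the timestamps must be converted from one time_base to the other.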
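The conversion above can be worked through in plain C. This is a sketch of the arithmetic only, not FFmpeg's implementation: `Rational` and `rescale_ts` are hypothetical stand-ins for AVRational and av_rescale_q, and the helper assumes non-negative timestamps whose intermediate products fit in an int64_t.

```c
#include <assert.h>
#include <stdint.h>

/* Hypothetical stand-in for FFmpeg's AVRational; not the real type. */
typedef struct {
    int num;
    int den;
} Rational;

/*
 * Convert ts from time base `from` to time base `to`, rounding to nearest.
 * Computes ts * (from.num / from.den) / (to.num / to.den) using integers only.
 */
int64_t rescale_ts(int64_t ts, Rational from, Rational to)
{
    assert(ts >= 0 && from.num > 0 && from.den > 0 && to.num > 0 && to.den > 0);
    int64_t numerator = ts * from.num * to.den;
    int64_t denominator = (int64_t)from.den * to.num;
    return (numerator + denominator / 2) / denominator;
}
```

With a pts of 20 in {1, 30}, the numerator is 20 * 1 * 1000000 and the denominator is 30, which rounds to 666667 in {1, 1000000}.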

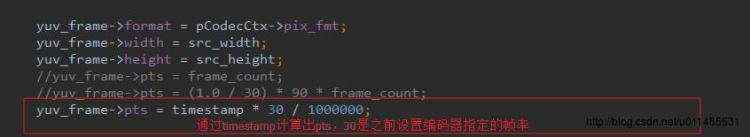

Once that is in place, the pts still has to be computed from the timestamp that was passed in and set on the frame.
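A minimal sketch of that computation, assuming the timestamp is in microseconds and the encoder time_base is 1/fps (the full listing uses `timestamp * 30 / 1000000`, which implies both). `pts_from_timestamp_us` is a hypothetical helper, not an FFmpeg function.

```c
#include <assert.h>
#include <stdint.h>

/*
 * Map a capture timestamp in microseconds to a pts expressed in a
 * time base of 1/fps seconds. Integer division truncates toward zero.
 */
int64_t pts_from_timestamp_us(int64_t timestamp_us, int fps)
{
    assert(timestamp_us >= 0 && fps > 0);
    return timestamp_us * fps / 1000000;
}
```

Because the pts follows the capture clock rather than a frame counter, a frame that arrives late receives a correspondingly later pts. One caveat of this formula is that two frames captured less than one tick apart can map to the same pts, so a finer time_base may be worth considering.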

At this point the stuttering problem is essentially solved.

The complete native code is as follows:

    //
    // Created by Administrator on 2017/2/19.
    //

    #include <jni.h>
    #include <android/log.h>
    #include <stdio.h>
    #include <string.h>

    #include "libavcodec/avcodec.h"
    #include "libavformat/avformat.h"
    #include "libavutil/time.h"
    #include "libavutil/imgutils.h"
    #include "libavfilter/avfiltergraph.h"
    #include "libavfilter/buffersink.h"
    #include "libavfilter/buffersrc.h"
    #include "libavutil/opt.h"

    #define LOG_TAG "FFmpeg"
    #define LOGE(format, ...) __android_log_print(ANDROID_LOG_ERROR, LOG_TAG, format, ##__VA_ARGS__)
    #define LOGI(format, ...) __android_log_print(ANDROID_LOG_INFO, LOG_TAG, format, ##__VA_ARGS__)

    AVFormatContext *ofmt_ctx = NULL;
    AVStream *out_stream = NULL;
    AVPacket pkt;
    AVCodecContext *pCodecCtx = NULL;
    AVCodec *pCodec = NULL;
    AVFrame *yuv_frame;
    int frame_count;
    int src_width;
    int src_height;
    int y_length;
    int uv_length;
    int64_t start_time;

    /**
     * Filter-related variables
     */
    const char *filter_descr = "transpose=clock"; // filter description: rotate 90 degrees clockwise
    AVFilterContext *buffersink_ctx;
    AVFilterContext *buffersrc_ctx;
    AVFilterGraph *filter_graph;
    int filterInitResult;
    AVFrame *new_frame;

    /**
     * Callback that writes FFmpeg's log output to a file on the sdcard
     */
    void live_log(void *ptr, int level, const char *fmt, va_list vl)
    {
        FILE *fp = fopen("/sdcard/123/live_log.txt", "a+");
        if (fp) {
            vfprintf(fp, fmt, vl);
            fflush(fp);
            fclose(fp);
        }
    }

    /**
     * Encoding helper, wrapped by hand because avcodec_encode_video2 is deprecated
     */
    int encode(AVCodecContext *pCodecCtx, AVPacket *pPkt, AVFrame *pFrame, int *got_packet)
    {
        int ret;

        *got_packet = 0;

        ret = avcodec_send_frame(pCodecCtx, pFrame);
        if (ret < 0 && ret != AVERROR_EOF) {
            return ret;
        }

        ret = avcodec_receive_packet(pCodecCtx, pPkt);
        if (ret < 0 && ret != AVERROR(EAGAIN)) {
            return ret;
        }

        if (ret >= 0) {
            *got_packet = 1;
        }
        return 0;
    }

    /**
     * Initialise the filter graph
     */
    int init_filters(const char *filters_descr)
    {
        /* Register all AVFilters */
        avfilter_register_all();

        char args[512];
        int ret = 0;
        AVFilter *buffersrc = avfilter_get_by_name("buffer");
        AVFilter *buffersink = avfilter_get_by_name("buffersink");
        AVFilterInOut *outputs = avfilter_inout_alloc();
        AVFilterInOut *inputs = avfilter_inout_alloc();
        enum AVPixelFormat pix_fmts[] = { AV_PIX_FMT_YUV420P, AV_PIX_FMT_NONE };

        // Allocate the FilterGraph
        filter_graph = avfilter_graph_alloc();
        if (!outputs || !inputs || !filter_graph) {
            ret = AVERROR(ENOMEM);
            goto end;
        }

        /* The parameters filled in here must describe the input frames correctly */
        snprintf(args, sizeof(args),
                 "video_size=%dx%d:pix_fmt=%d:time_base=%d/%d:pixel_aspect=%d/%d",
                 src_width, src_height, pCodecCtx->pix_fmt,
                 pCodecCtx->time_base.num, pCodecCtx->time_base.den,
                 pCodecCtx->sample_aspect_ratio.num, pCodecCtx->sample_aspect_ratio.den);

        // Create the buffer source filter and add it to the FilterGraph
        ret = avfilter_graph_create_filter(&buffersrc_ctx, buffersrc, "in", args, NULL, filter_graph);
        if (ret < 0) {
            LOGE("Cannot create buffer source\n");
            goto end;
        }

        // Create the buffer sink filter and add it to the FilterGraph
        ret = avfilter_graph_create_filter(&buffersink_ctx, buffersink, "out", NULL, NULL, filter_graph);
        if (ret < 0) {
            LOGE("Cannot create buffer sink\n");
            goto end;
        }

        ret = av_opt_set_int_list(buffersink_ctx, "pix_fmts", pix_fmts, AV_PIX_FMT_NONE, AV_OPT_SEARCH_CHILDREN);
        if (ret < 0) {
            LOGE("Cannot set output pixel format\n");
            goto end;
        }

        outputs->name = av_strdup("in");
        outputs->filter_ctx = buffersrc_ctx;
        outputs->pad_idx = 0;
        outputs->next = NULL;

        inputs->name = av_strdup("out");
        inputs->filter_ctx = buffersink_ctx;
        inputs->pad_idx = 0;
        inputs->next = NULL;

        // Add the graph described by the string to the FilterGraph
        if ((ret = avfilter_graph_parse_ptr(filter_graph, filters_descr, &inputs, &outputs, NULL)) < 0) {
            LOGE("parse ptr error\n");
            goto end;
        }

        // Check the FilterGraph configuration
        if ((ret = avfilter_graph_config(filter_graph, NULL)) < 0) {
            LOGE("parse config error\n");
            goto end;
        }

        // Frame used to hold the filtered output
        new_frame = av_frame_alloc();
        //uint8_t *out_buffer = (uint8_t *) av_malloc(av_image_get_buffer_size(pCodecCtx->pix_fmt, pCodecCtx->width, pCodecCtx->height, 1));
        //av_image_fill_arrays(new_frame->data, new_frame->linesize, out_buffer, pCodecCtx->pix_fmt, pCodecCtx->width, pCodecCtx->height, 1);

    end:
        avfilter_inout_free(&inputs);
        avfilter_inout_free(&outputs);
        return ret;
    }

    JNIEXPORT jstring JNICALL
    Java_com_xiaoxiao_live_MainActivity_helloFromFFmpeg(JNIEnv *env, jobject instance)
    {
        char info[10000] = {0};
        snprintf(info, sizeof(info), "%s\n", avcodec_configuration());
        return (*env)->NewStringUTF(env, info);
    }

    JNIEXPORT jint JNICALL
    Java_com_xiaoxiao_live_LiveActivity_streamerRelease(JNIEnv *env, jobject instance)
    {
        if (pCodecCtx) {
            avcodec_close(pCodecCtx);
            pCodecCtx = NULL;
        }
        if (ofmt_ctx) {
            avio_close(ofmt_ctx->pb);
            avformat_free_context(ofmt_ctx);
            ofmt_ctx = NULL;
        }
        if (yuv_frame) {
            av_frame_free(&yuv_frame);
            yuv_frame = NULL;
        }
        if (filter_graph) {
            avfilter_graph_free(&filter_graph);
            filter_graph = NULL;
        }
        if (new_frame) {
            av_frame_free(&new_frame);
            new_frame = NULL;
        }
        return 0;
    }

    JNIEXPORT jint JNICALL
    Java_com_xiaoxiao_live_LiveActivity_streamerFlush(JNIEnv *env, jobject instance)
    {
        int ret;
        int got_packet;
        AVPacket packet;

        // Nothing to flush if the encoder does not buffer frames
        if (!(pCodec->capabilities & CODEC_CAP_DELAY)) {
            return 0;
        }

        while (1) {
            packet.data = NULL;
            packet.size = 0;
            av_init_packet(&packet);

            // A NULL frame drains the packets still held by the encoder
            ret = encode(pCodecCtx, &packet, NULL, &got_packet);
            if (ret < 0) {
                break;
            }
            if (!got_packet) {
                ret = 0;
                break;
            }

            LOGI("Encode 1 frame size:%d\n", packet.size);

            AVRational time_base = ofmt_ctx->streams[0]->time_base;
            AVRational r_frame_rate1 = {60, 2};
            AVRational time_base_q = {1, AV_TIME_BASE};
            int64_t calc_duration = (double)(AV_TIME_BASE) * (1 / av_q2d(r_frame_rate1));

            packet.pts = av_rescale_q(frame_count * calc_duration, time_base_q, time_base);
            packet.dts = packet.pts;
            packet.duration = av_rescale_q(calc_duration, time_base_q, time_base);
            packet.pos = -1;
            frame_count++;
            ofmt_ctx->duration = packet.duration * frame_count;

            ret = av_interleaved_write_frame(ofmt_ctx, &packet);
            if (ret < 0) {
                break;
            }
        }

        // Write the file trailer
        av_write_trailer(ofmt_ctx);
        return 0;
    }

    JNIEXPORT jint JNICALL
    Java_com_xiaoxiao_live_LiveActivity_streamerHandle(JNIEnv *env, jobject instance,
                                                       jbyteArray data_, jlong timestamp)
    {
        jbyte *data = (*env)->GetByteArrayElements(env, data_, NULL);

        int ret = 0, i, resultCode = 0;
        int got_packet = 0;

        /**
         * The NV21 to AV_PIX_FMT_YUV420P conversion mentioned earlier:
         * copy the Y plane, then split the interleaved chroma bytes into two planes
         */
        memcpy(yuv_frame->data[0], data, y_length);
        for (i = 0; i < uv_length; i++) {
            // NV21 stores V first, then U
            *(yuv_frame->data[2] + i) = *(data + y_length + i * 2);
            *(yuv_frame->data[1] + i) = *(data + y_length + i * 2 + 1);
        }

        yuv_frame->format = pCodecCtx->pix_fmt;
        yuv_frame->width = src_width;
        yuv_frame->height = src_height;
        //yuv_frame->pts = frame_count;
        //yuv_frame->pts = (1.0 / 30) * 90 * frame_count;
        yuv_frame->pts = timestamp * 30 / 1000000;

        pkt.data = NULL;
        pkt.size = 0;
        av_init_packet(&pkt);

        if (filterInitResult >= 0) {
            // Push an AVFrame into the FilterGraph
            ret = av_buffersrc_add_frame(buffersrc_ctx, yuv_frame);
            if (ret >= 0) {
                // Pull the filtered AVFrame out of the FilterGraph
                ret = av_buffersink_get_frame(buffersink_ctx, new_frame);
                if (ret >= 0) {
                    ret = encode(pCodecCtx, &pkt, new_frame, &got_packet);
                    // Release the filtered frame's buffers before new_frame is reused
                    av_frame_unref(new_frame);
                } else {
                    LOGE("Error while getting the filtergraph\n");
                }
            } else {
                LOGE("Error while feeding the filtergraph\n");
            }
        }

        if (filterInitResult < 0 || ret < 0) {
            LOGE("encode from yuv data");
            /**
             * The filter changes the frame's width and height, so the encoder that was
             * initialised for the rotated size cannot encode the unfiltered frame.
             * Supporting the case where the filter fails to initialise would require
             * a second encoder initialised with the matching width and height.
             */
            // Encode
            //ret = encode(pCodecCtx, &pkt, yuv_frame, &got_packet);
        }

        if (ret < 0) {
            resultCode = -1;
            LOGE("Encode error\n");
            goto end;
        }

        if (got_packet) {
            LOGI("Encode frame: %d\tsize:%d\n", frame_count, pkt.size);
            frame_count++;
            pkt.stream_index = out_stream->index;

            // Convert the packet's valid timing fields (timestamps/duration) from one time_base to another
            av_packet_rescale_ts(&pkt, pCodecCtx->time_base, out_stream->time_base);

            // Write PTS/DTS (previous approach, kept for reference)
            /*AVRational time_base1 = ofmt_ctx->streams[0]->time_base;
            AVRational r_frame_rate1 = {60, 2};
            AVRational time_base_q = {1, AV_TIME_BASE};
            int64_t calc_duration = (double)(AV_TIME_BASE) * (1 / av_q2d(r_frame_rate1));
            pkt.pts = av_rescale_q(frame_count * calc_duration, time_base_q, time_base1);
            pkt.dts = pkt.pts;
            pkt.duration = av_rescale_q(calc_duration, time_base_q, time_base1);
            pkt.pos = -1;

            // Handle the delay
            int64_t pts_time = av_rescale_q(pkt.dts, time_base1, time_base_q);
            int64_t now_time = av_gettime() - start_time;
            if(pts_time > now_time) {
                av_usleep(pts_time - now_time);
            }
            */

            ret = av_interleaved_write_frame(ofmt_ctx, &pkt);
            if (ret < 0) {
                LOGE("Error muxing packet");
                resultCode = -1;
                goto end;
            }
            av_packet_unref(&pkt);
        }

    end:
        (*env)->ReleaseByteArrayElements(env, data_, data, 0);
        return resultCode;
    }

    JNIEXPORT jint JNICALL
    Java_com_xiaoxiao_live_LiveActivity_streamerInit(JNIEnv *env, jobject instance, jint width,
                                                     jint height)
    {
        int ret = 0;
        const char *address = "rtmp://192.168.1.102/oflaDemo/test";

        src_width = width;
        src_height = height;
        // Size (in bytes) of the Y plane in the YUV data
        y_length = width * height;
        // Size of each of the U and V planes
        uv_length = y_length / 4;

        // Install the callback that writes the log
        av_log_set_callback(live_log);

        // Register all formats and codecs
        av_register_all();

        // Pushing a stream requires the network protocols to be initialised
        avformat_network_init();

        // Initialise the AVFormatContext
        avformat_alloc_output_context2(&ofmt_ctx, NULL, "flv", address);
        if (!ofmt_ctx) {
            LOGE("Could not create output context\n");
            return -1;
        }

        // Find the encoder; this is the x264 one
        pCodec = avcodec_find_encoder(AV_CODEC_ID_H264);
        if (!pCodec) {
            LOGE("Can not find encoder!\n");
            return -1;
        }

        // Initialise the encoder context
        pCodecCtx = avcodec_alloc_context3(pCodec);
        pCodecCtx->pix_fmt = AV_PIX_FMT_YUV420P; // pixel format to encode
        // Width and height are swapped because the filter rotates each frame by 90 degrees
        pCodecCtx->width = height;
        pCodecCtx->height = width;
        pCodecCtx->time_base.num = 1;
        pCodecCtx->time_base.den = 30;
        pCodecCtx->bit_rate = 800000;
        pCodecCtx->gop_size = 300;

        if (ofmt_ctx->oformat->flags & AVFMT_GLOBALHEADER) {
            pCodecCtx->flags |= CODEC_FLAG_GLOBAL_HEADER;
        }

        pCodecCtx->qmin = 10;
        pCodecCtx->qmax = 51;
        pCodecCtx->max_b_frames = 3;

        AVDictionary *dicParams = NULL;
        av_dict_set(&dicParams, "preset", "ultrafast", 0);
        av_dict_set(&dicParams, "tune", "zerolatency", 0);

        // Open the encoder
        if (avcodec_open2(pCodecCtx, pCodec, &dicParams) < 0) {
            LOGE("Failed to open encoder!\n");
            return -1;
        }

        // Create the output stream
        out_stream = avformat_new_stream(ofmt_ctx, pCodec);
        if (!out_stream) {
            LOGE("Failed allocation output stream\n");
            return -1;
        }
        out_stream->time_base.num = 1;
        out_stream->time_base.den = 30;

        // Copy the encoder configuration to the output stream
        avcodec_parameters_from_context(out_stream->codecpar, pCodecCtx);

        // Open the output
        ret = avio_open(&ofmt_ctx->pb, address, AVIO_FLAG_WRITE);
        if (ret < 0) {
            LOGE("Could not open output URL %s", address);
            return -1;
        }

        ret = avformat_write_header(ofmt_ctx, NULL);
        if (ret < 0) {
            LOGE("Error occurred when open output URL\n");
            return -1;
        }

        // Allocate the frame used as encoder input, in AV_PIX_FMT_YUV420P format
        yuv_frame = av_frame_alloc();
        uint8_t *out_buffer = (uint8_t *) av_malloc(av_image_get_buffer_size(pCodecCtx->pix_fmt, src_width, src_height, 1));
        av_image_fill_arrays(yuv_frame->data, yuv_frame->linesize, out_buffer, pCodecCtx->pix_fmt, src_width, src_height, 1);

        start_time = av_gettime();

        /**
         * Initialise the filter
         */
        filterInitResult = init_filters(filter_descr);
        if (filterInitResult < 0) {
            LOGE("Filter init error");
        }

        return 0;
    }
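The NV21-to-YUV420P step inside streamerHandle is easier to follow when looked at in isolation. The following is a minimal sketch, assuming even dimensions and tightly packed planes with no row padding; `nv21_to_i420` is a hypothetical helper, not an FFmpeg function.

```c
#include <assert.h>
#include <stdint.h>
#include <string.h>

/*
 * Convert a tightly packed NV21 buffer to planar YUV420P (I420).
 * NV21 layout: width * height Y bytes, followed by interleaved V,U pairs.
 */
void nv21_to_i420(const uint8_t *nv21, int width, int height,
                  uint8_t *y_plane, uint8_t *u_plane, uint8_t *v_plane)
{
    assert(width > 0 && height > 0 && width % 2 == 0 && height % 2 == 0);
    int y_length = width * height;
    int uv_length = y_length / 4;

    memcpy(y_plane, nv21, (size_t)y_length);
    for (int i = 0; i < uv_length; i++) {
        v_plane[i] = nv21[y_length + 2 * i];     /* V comes first in NV21 */
        u_plane[i] = nv21[y_length + 2 * i + 1]; /* U follows */
    }
}
```

Swapping the two chroma assignments would produce a picture with the colours visibly wrong, which is a quick way to tell that the V/U order has been mixed up.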

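With that, both problems described above are fixed, although some latency remains (I am still looking for the cause). Also, after switching to the front camera the picture is wrongly oriented again, because the front camera needs a 270-degree clockwise rotation. This is where the filter approach starts to show its limits: since initialising a filter is so cumbersome, using one to fix the picture orientation is awkward, and a different approach is needed.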
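For reference, the transpose filter also has a counterclockwise direction, so one possible stopgap is to pick the filter description per camera. This sketch assumes the filter graph is torn down and rebuilt with init_filters whenever the camera is switched; `filter_descr_for_camera` and `is_front_camera` are hypothetical names, and any mirroring the front camera may additionally need is not handled.

```c
/*
 * Pick an FFmpeg filter description for the active camera.
 * "transpose=clock"  rotates 90 degrees clockwise (the back-camera case in this article).
 * "transpose=cclock" rotates 90 degrees counterclockwise, i.e. 270 degrees clockwise.
 */
const char *filter_descr_for_camera(int is_front_camera)
{
    return is_front_camera ? "transpose=cclock" : "transpose=clock";
}
```

Both directions swap width and height in the same way, so the encoder dimensions would not need to change, but rebuilding the graph on every switch is exactly the kind of overhead that makes this approach awkward.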

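So the preview data should be rotated before it is encoded. With future extensions in mind, such as a beauty filter, the data also has to be modified before it is previewed. SurfaceView cannot do that, so TextureView or GLSurfaceView is needed instead. Both give access to the data before it is displayed and let you draw it yourself. GLSurfaceView is the more powerful of the two, so that is what we will use.

The problems that remain will be dealt with in later articles; the next one uses GLSurfaceView instead of the filter to fix the picture orientation.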

京公网安备 11010802041100号 | 京ICP备19059560号-4 | PHP1.CN 第一PHP社区 版权所有
